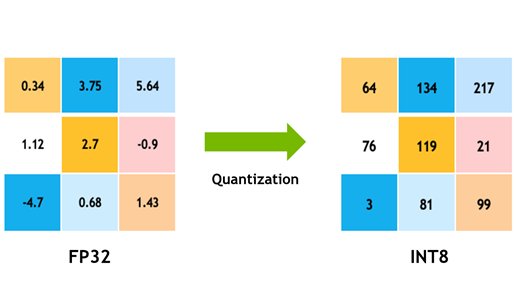

Achieving FP32 Accuracy for INT8 Inference Using Quantization Aware Training with NVIDIA TensorRT | NVIDIA Technical Blog

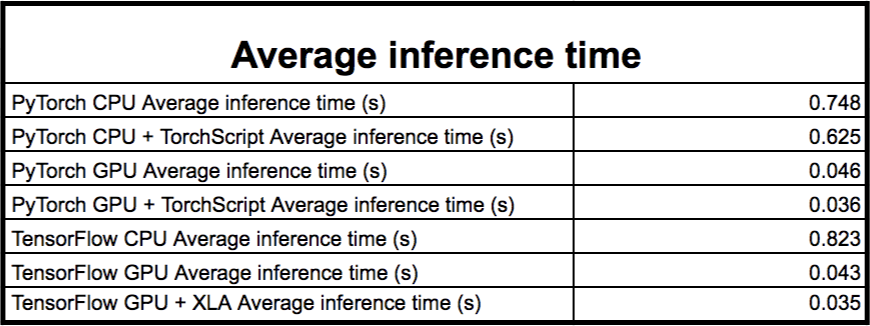

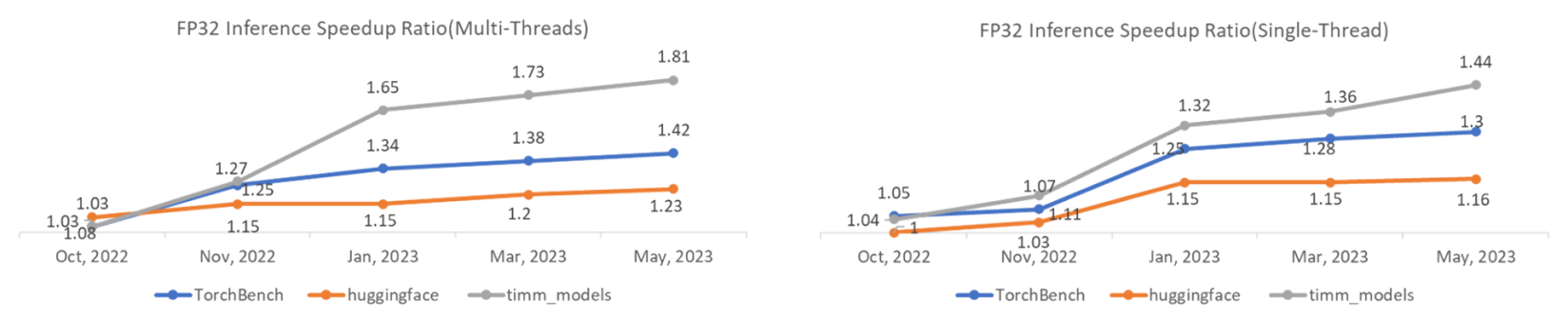

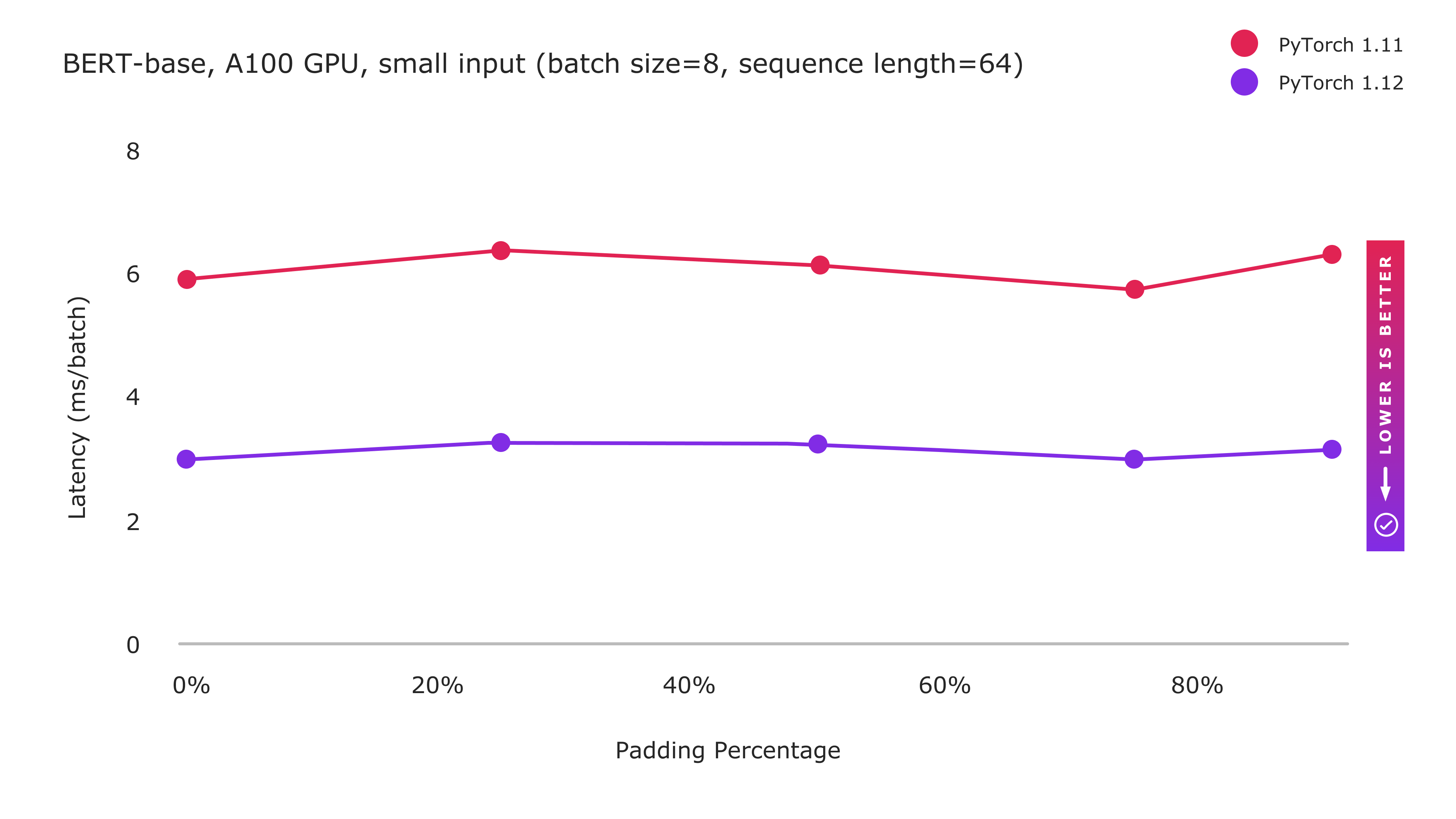

TorchServe: Increasing inference speed while improving efficiency - deployment - PyTorch Dev Discussions

Inference mode complains about inplace at torch.mean call, but I don't use inplace · Issue #70177 · pytorch/pytorch · GitHub

Inference mode throws RuntimeError for `torch.repeat_interleave()` for big tensors · Issue #75595 · pytorch/pytorch · GitHub

PyTorch on X: "4. ⚠️ Inference tensors can't be used outside InferenceMode for Autograd operations. ⚠️ Inference tensors can't be modified in-place outside InferenceMode. ✓ Simply clone the inference tensor and you're

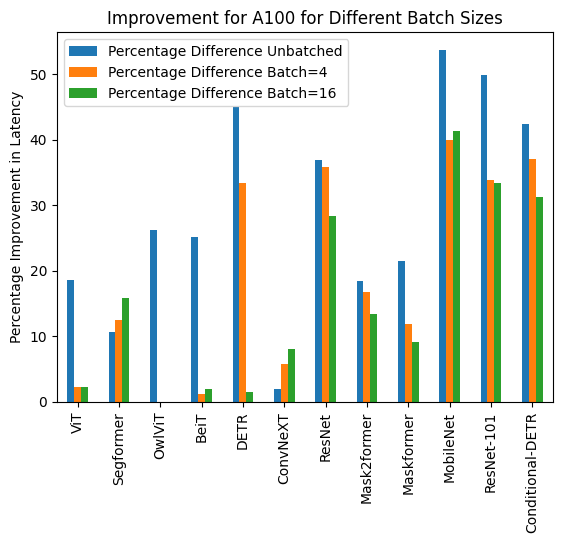

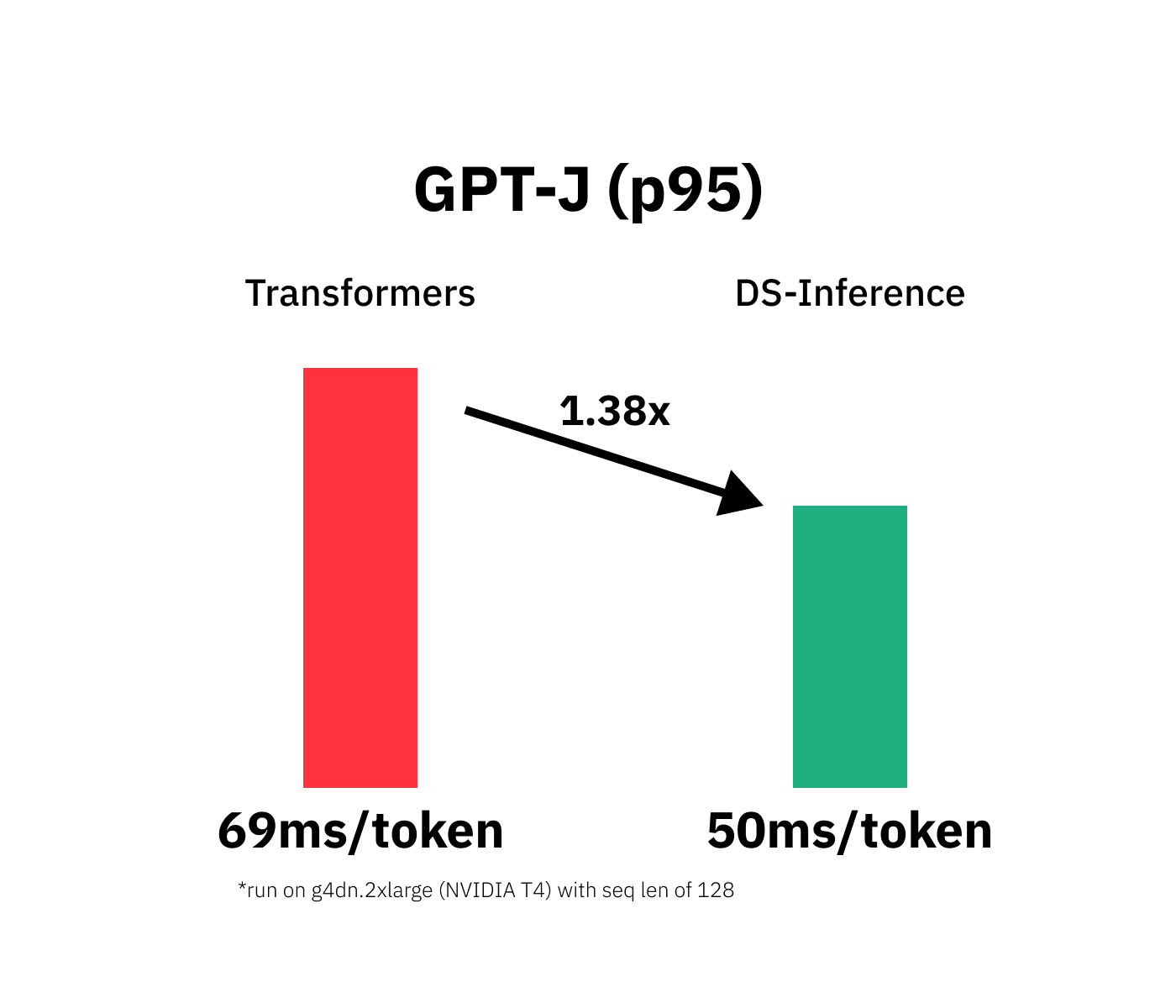

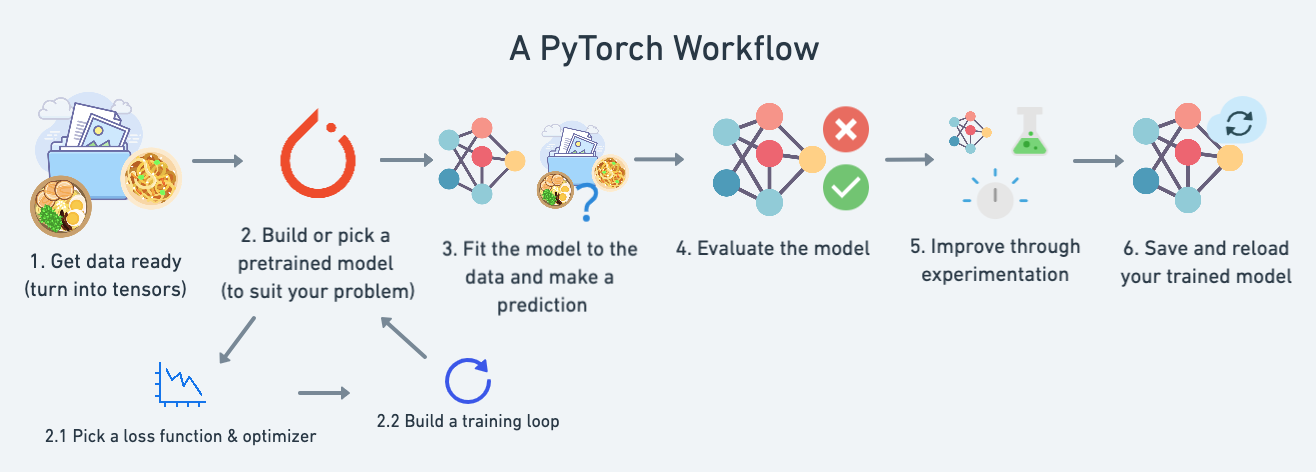

Deployment of Deep Learning models on Genesis Cloud - Deployment techniques for PyTorch models using TensorRT | Genesis Cloud Blog